If you've ever asked an AI a complex question and gotten an answer that was confidently wrong, you've likely seen what happens when a language model skips its reasoning process. The model jumps straight to a conclusion without working through the logic, and the result looks polished but falls apart under scrutiny. Chain-of-thought prompting is a technique that addresses this. It's one of the most well-researched and practically effective tools in prompt engineering, and once you understand how it works, it can change the way you use AI.

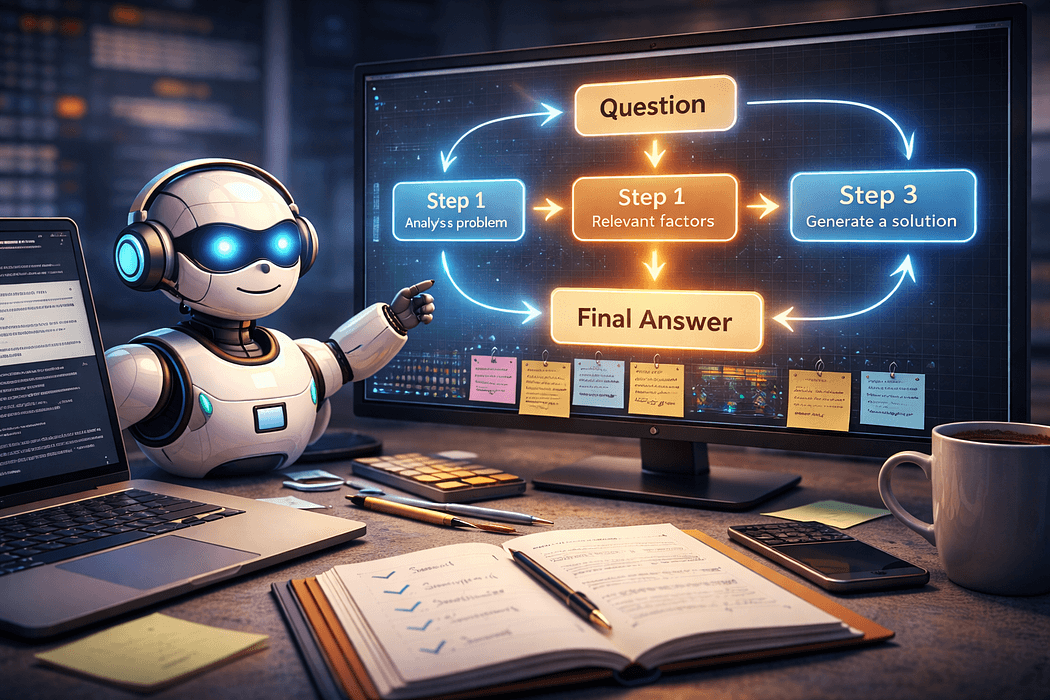

Chain-of-thought prompting is a technique that instructs a large language model to reason through a problem step by step before delivering its final answer. Instead of jumping directly to a conclusion, the model is guided to show its work: breaking down the problem, working through intermediate steps, and only then arriving at a response grounded in explicit reasoning rather than pattern-matched surface output.

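The term was introduced in a 2022 research paper by Wei et al. at Google Research's Brain team, who showed that including a few worked examples with written-out intermediate reasoning in a prompt substantially improved large models' performance on arithmetic, commonsense, and symbolic reasoning tasks. A follow-up paper the same year by Kojima et al. found that simply adding the phrase "Let's think step by step" to a prompt, with no examples at all, also produced large gains. The findings were striking because they required no additional training, just a change in how the prompt was written.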

In 2026, chain-of-thought prompting has become a foundational technique across generative AI applications, from enterprise decision-support tools to consumer AI assistants.

To understand why chain-of-thought prompting improves AI accuracy, it helps to understand how language models generate responses. LLMs generate text one token at a time, predicting each next token from everything that came before it in the sequence. When you ask a complex question and expect an immediate answer, the model is essentially trying to predict the correct final output in one leap, without the intermediate context that would normally constrain it toward accuracy.

By prompting the model to reason step by step, you're changing the structure of what comes before each prediction. Each reasoning step becomes new context that makes the subsequent step more accurate. The model is effectively building a scaffold of logic that constrains it toward a correct conclusion rather than the most statistically probable-sounding one.

This is why chain-of-thought prompting is particularly powerful for tasks involving multi-step math, causal reasoning, comparative analysis, and any scenario where intermediate logic matters.

There are two main ways to apply chain-of-thought prompting, and knowing when to use each is an important practical skill.

Zero-shot chain-of-thought is the simpler approach. You don't provide any examples; you simply add a reasoning instruction to your prompt. Phrases like "think through this step by step," "reason through this carefully before answering," or "explain your reasoning before giving your final answer" are all effective triggers. This works well for most reasoning tasks and is the best starting point when you're working with a new problem type.
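
To make the zero-shot pattern concrete, here is a minimal Python sketch that appends a reasoning trigger to a question. The helper name `zero_shot_cot` is hypothetical, and the default trigger is simply the widely cited "Let's think step by step."; any of the phrases above should slot in the same way. It only builds the prompt string; sending it to a model is left to whichever client you use.

```python
# Minimal sketch of zero-shot chain-of-thought: no worked examples, just a
# reasoning instruction appended to the question. The function name and the
# default trigger are illustrative choices, not a standard API.
DEFAULT_TRIGGER = "Let's think step by step."


def zero_shot_cot(question: str, trigger: str = DEFAULT_TRIGGER) -> str:
    """Return the question, a blank line, then the reasoning trigger."""
    return f"{question.strip()}\n\n{trigger}"


if __name__ == "__main__":
    print(zero_shot_cot("A train leaves at 9:40 and arrives at 11:15. How long is the trip?"))
    # Any other trigger phrase can be passed in instead of the default.
    print(zero_shot_cot(
        "Which supplier is cheaper over three years?",
        trigger="Explain your reasoning before giving your final answer.",
    ))
```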

Few-shot chain-of-thought involves providing one or more worked examples within the prompt, showing the model exactly what the reasoning process should look like before asking it to apply that process to a new problem. This approach takes more effort to set up but produces more consistent results for specialized tasks, complex domain-specific reasoning, or cases where the model's default reasoning pattern doesn't match what you need.
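
A few-shot prompt is mostly careful string assembly. The sketch below assumes a simple "Q:/A:" layout (a common convention, not a requirement), renders each worked example with its reasoning visible, and leaves the last answer open so the model continues the pattern. `WorkedExample` and `few_shot_cot` are illustrative names, and the tennis-ball problem is just a sample arithmetic question.

```python
from dataclasses import dataclass


@dataclass
class WorkedExample:
    """One demonstration: a question, the reasoning to imitate, and its answer."""
    question: str
    reasoning: str
    answer: str


def few_shot_cot(examples: list[WorkedExample], new_question: str) -> str:
    """Render each worked example as Q/A with visible reasoning, then the new question."""
    blocks = [
        f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}."
        for ex in examples
    ]
    # Leave the final answer slot open so the model continues in the same pattern.
    blocks.append(f"Q: {new_question}\nA:")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demo = WorkedExample(
        question="Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
                 "Each can has 3 tennis balls. How many tennis balls does he have now?",
        reasoning="Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 balls. 5 + 6 = 11.",
        answer="11",
    )
    print(few_shot_cot([demo], "A cafeteria had 23 apples. It used 20 for lunch and "
                               "bought 6 more. How many apples does it have?"))
```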

The mechanics are simpler than most people expect. What separates a good chain-of-thought prompt from a weak one is specificity, structure, and the clarity of what you're asking the model to reason about.

A weak prompt: "Is this business plan viable?"

A strong chain-of-thought prompt: "You are a senior business strategist with 15 years of experience evaluating early-stage ventures. Review the following business plan. First, identify the core value proposition and assess its market fit. Second, analyze the financial assumptions and flag any that seem unrealistic. Third, evaluate the competitive landscape and note key risks. Finally, give your overall assessment of viability with a clear rationale. Think through each step before reaching your conclusion."

The stronger version does several things at once. It assigns a role (role prompting), breaks the reasoning into defined stages, specifies what to look for at each stage, and explicitly asks for reasoning before conclusion. Each of those additions contributes to a more accurate, more useful output.
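
Because the strong prompt follows a repeatable shape (role, task, ordered stages, reason-before-conclusion), it can be assembled from parts. This is a minimal sketch under that assumption; the function name and the wording of the closing instruction are illustrative, and it numbers the stages rather than writing "First... Second...".

```python
def staged_cot_prompt(role: str, task: str, stages: list[str]) -> str:
    """Combine a role, an ordered list of reasoning stages, and a reason-first instruction."""
    lines = [f"You are {role}.", task, "Work through the following stages in order:"]
    # Number the stages so the model (and a reviewer) can refer back to each one.
    lines += [f"{i}. {stage}" for i, stage in enumerate(stages, start=1)]
    lines.append("Think through each stage before reaching your conclusion, "
                 "and state your conclusion last.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(staged_cot_prompt(
        role="a senior business strategist with 15 years of experience "
             "evaluating early-stage ventures",
        task="Review the following business plan.",
        stages=[
            "Identify the core value proposition and assess its market fit.",
            "Analyze the financial assumptions and flag any that seem unrealistic.",
            "Evaluate the competitive landscape and note key risks.",
            "Give your overall assessment of viability with a clear rationale.",
        ],
    ))
```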

Role prompting and chain-of-thought prompting are two of the most powerful individual techniques in the prompt engineer's toolkit, and they're even more effective together.

Role prompting assigns the model a specific identity or expertise, which shapes the vocabulary, depth, and assumptions in its responses. When you combine this with chain-of-thought instructions, you get structured reasoning filtered through a specific expertise lens.

Basic prompt: "What are the risks of combining these two medications?"

Combined prompt: "You are a clinical pharmacist with expertise in drug interactions. A patient is asking about the risks of combining [Medication A] and [Medication B]. Work through your assessment step by step: first identify the mechanism of each drug, then assess known interaction pathways, then evaluate the severity and likelihood of adverse effects, and finally summarize the clinical recommendation. Present your reasoning clearly at each step."

The combined approach produces output that is more accurate, more appropriately hedged, and structured in a way that's actually useful to the reader. The role anchors the domain; the chain-of-thought anchors the logic.
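
When the same role-plus-reasoning prompt is reused with different inputs, turning the bracketed placeholders into template fields avoids hand-editing each time. A minimal sketch using Python's standard `string.Template`; the field names `$drug_a` and `$drug_b` are our own choice.

```python
from string import Template

# The combined role + chain-of-thought prompt, with the bracketed
# placeholders turned into Template fields ($drug_a and $drug_b are our names).
PHARMACIST_COT = Template(
    "You are a clinical pharmacist with expertise in drug interactions. "
    "A patient is asking about the risks of combining $drug_a and $drug_b. "
    "Work through your assessment step by step: first identify the mechanism of each drug, "
    "then assess known interaction pathways, then evaluate the severity and likelihood of "
    "adverse effects, and finally summarize the clinical recommendation. "
    "Present your reasoning clearly at each step."
)

if __name__ == "__main__":
    print(PHARMACIST_COT.substitute(drug_a="Medication A", drug_b="Medication B"))
```

`substitute()` raises `KeyError` if a field is left unfilled (unlike `safe_substitute()`), so a half-filled prompt fails loudly instead of reaching the model with a placeholder still in it.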

One of the most common mistakes with chain-of-thought prompting is assuming that more reasoning output is always better. Without constraints, models can over-generate, producing elaborate reasoning chains that are verbose, circular, or padded. Adding smart constraints to your chain-of-thought prompts keeps the output focused and usable.

Effective constraints to pair with chain-of-thought prompting include: limiting the number of reasoning steps ("work through this in no more than four steps"), specifying what to include or exclude at each step, setting an output length limit for the final conclusion, and requiring a specific confidence statement at the end ("rate your confidence in this answer from 1 to 10 and explain why").
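
These constraints can be appended mechanically, and the confidence rating is easiest to use downstream if you ask for a fixed format and parse it. This sketch assumes the model is told to end with a line like `Confidence: 7/10`; the helper names, default limits, and regex are illustrative. Models won't always comply, which is why the parser returns `None` rather than guessing.

```python
import re
from typing import Optional

# Matches lines like "Confidence: 7/10"; this format is something we ask for
# in the prompt, not a convention models follow on their own.
CONFIDENCE_RE = re.compile(r"Confidence:\s*(\d{1,2})\s*/\s*10")


def add_constraints(prompt: str, max_steps: int = 4, max_conclusion_words: int = 50) -> str:
    """Append step-count, length, and confidence-rating constraints to a CoT prompt."""
    constraints = [
        f"Work through this in no more than {max_steps} steps.",
        f"Keep your final conclusion under {max_conclusion_words} words.",
        "End with a line of the form 'Confidence: N/10' (N from 1 to 10) "
        "and one sentence explaining why.",
    ]
    return prompt.rstrip() + "\n\n" + "\n".join(constraints)


def parse_confidence(response: str) -> Optional[int]:
    """Return the last 1-10 confidence rating in the response, or None if absent or out of range."""
    matches = CONFIDENCE_RE.findall(response)
    if not matches:
        return None
    # Use the last rating in case the reasoning mentions confidence earlier on.
    value = int(matches[-1])
    return value if 1 <= value <= 10 else None
```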

A well-constrained chain-of-thought prompt produces reasoning that is both rigorous and readable: the goal is precision, not volume.

For production use cases, combining chain-of-thought reasoning with structured output formatting creates AI responses that are both logically sound and practically usable downstream.

Structured output formatting means specifying the exact shape of the response: JSON, labeled sections, tables, numbered conclusions, or any format that makes the output easy to consume. When you combine this with chain-of-thought instructions, you get structured reasoning that can be parsed, stored, or fed into another system without reformatting.

Example: "You are a financial analyst. Analyze the following quarterly earnings report using step-by-step reasoning. Return your output as a JSON object with four keys: reasoning_steps (an array of your analytical steps), key_findings (a list of the three most important takeaways), risk_flags (any concerns identified), and recommendation (a single-sentence conclusion)."
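
Asking for JSON only pays off if you check what comes back. Here is a minimal validation sketch for the four keys in the example prompt, assuming `reasoning_steps`, `key_findings`, and `risk_flags` arrive as lists and `recommendation` as a string (the prompt doesn't pin down the type of `risk_flags`, so treating it as a list is a guess). The sample reply is invented for illustration.

```python
import json

# Expected shape, taken from the four keys named in the prompt.
# Treating risk_flags as a list (rather than a string) is an assumption.
EXPECTED_TYPES = {
    "reasoning_steps": list,
    "key_findings": list,
    "risk_flags": list,
    "recommendation": str,
}


def validate_analysis(raw: str) -> dict:
    """Parse the model's reply and raise ValueError if it doesn't match the requested shape."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = EXPECTED_TYPES.keys() - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    for key, expected in EXPECTED_TYPES.items():
        if not isinstance(data[key], expected):
            raise ValueError(f"{key} should be a {expected.__name__}")
    if len(data["key_findings"]) != 3:
        raise ValueError("key_findings should hold exactly three items")
    return data


if __name__ == "__main__":
    # Invented reply, for illustration only.
    reply = json.dumps({
        "reasoning_steps": ["Compare revenue to prior quarter", "Check margin trend"],
        "key_findings": ["Revenue up", "Margins flat", "Cash position stable"],
        "risk_flags": ["Customer concentration"],
        "recommendation": "Hold pending next quarter's guidance.",
    })
    print(validate_analysis(reply)["recommendation"])
```

Keeping `reasoning_steps` in the stored object is what makes the reasoning itself auditable later, not just the recommendation.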

This approach is increasingly standard in enterprise AI workflows where the reasoning output itself needs to be audited or explained, not just the final answer.

Understanding the technique is one thing. Seeing where it creates the most value in practice is what makes it immediately applicable.

In legal and compliance work, chain-of-thought prompts are used to guide AI through contract review step by step (identifying clauses, assessing risk, and flagging areas of concern), with each reasoning stage documented. In software development, developers use chain-of-thought prompting to get AI to reason through debugging problems systematically rather than generating a guess-and-check answer. In customer support triage, AI systems use structured reasoning chains to categorize issues, assess priority, and route tickets with explainable logic rather than opaque classification.

In content and editorial work, chain-of-thought prompts are used to evaluate drafts against specific criteria (clarity, accuracy, audience fit), with each criterion assessed in a defined sequence before an overall recommendation is made.

The common thread across all of these is that the task involves multiple variables that need to be considered in relation to each other before a conclusion is reached. That's the profile of problems where chain-of-thought prompting delivers the biggest improvement over standard prompting.

The most frequent mistake is using chain-of-thought instructions without clearly defining what the model should reason about. Telling the model to "think step by step" without specifying the relevant dimensions of the problem produces generic reasoning that doesn't add much value over a direct answer.

A second common mistake is applying chain-of-thought prompting to tasks that don't require it. For simple factual lookups, summarization of short texts, or basic formatting tasks, adding a reasoning chain slows down the output and adds noise without improving accuracy. Match the technique to the task complexity.
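
One way to act on this is to decide per request whether to add a reasoning instruction at all. In this sketch the task labels in `SIMPLE_TASKS` are hypothetical; how you classify incoming requests is up to your application, and this only shows the routing decision.

```python
# Hypothetical task labels; classifying requests is left to your application.
SIMPLE_TASKS = {"factual_lookup", "short_summary", "formatting"}
COT_TRIGGER = "Reason through this step by step before giving your final answer."


def build_prompt(task_type: str, request: str) -> str:
    """Add a chain-of-thought instruction only when the task is not a simple one."""
    if task_type in SIMPLE_TASKS:
        return request
    return f"{request}\n\n{COT_TRIGGER}"
```

Unrecognized task types fall through to the chain-of-thought branch, a design choice that errs toward more reasoning when the task isn't known to be simple.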

A third mistake is failing to validate the reasoning chain itself. A confident, well-structured reasoning process can still reach a wrong conclusion. Always evaluate both the quality of the reasoning steps and the accuracy of the final output, especially for high-stakes decisions.
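
Part of that validation can be automated when you control the response format. This sketch assumes the prompt asked for `Step N:` lines and a closing `Final answer:` line, and that you have a trusted reference answer for test inputs; the function name and patterns are illustrative. The demo reply is well structured and confident, yet its arithmetic is wrong, which is exactly the case the check is meant to catch.

```python
import re
from typing import List, Optional

# Assumes the prompt asked for lines like "Step 1: ..." and a closing
# "Final answer: ..." line; adjust the patterns to whatever format you request.
STEP_RE = re.compile(r"^Step \d+:", re.MULTILINE)
FINAL_RE = re.compile(r"^Final answer:\s*(.+)$", re.MULTILINE)


def check_response(response: str, expected: Optional[str] = None) -> List[str]:
    """Return the problems found; an empty list means none were detected, not that it's correct."""
    problems = []
    if not STEP_RE.search(response):
        problems.append("no numbered reasoning steps found")
    finals = FINAL_RE.findall(response)
    if not finals:
        problems.append("no final answer line found")
    elif expected is not None and finals[-1].strip() != expected:
        problems.append(f"final answer {finals[-1].strip()!r} != expected {expected!r}")
    return problems


if __name__ == "__main__":
    # A tidy, confident chain with an arithmetic slip in step 2.
    reply = ("Step 1: Roger starts with 5 balls.\n"
             "Step 2: 2 cans of 3 balls is 6 balls, and 5 + 6 = 12.\n"
             "Final answer: 12")
    print(check_response(reply, expected="11"))
```

A clean result only means these checks passed; for high-stakes decisions it doesn't replace reading the steps themselves.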

In applied use across a wide range of domains, the following practices tend to produce the best results with chain-of-thought prompting.

Always define the reasoning dimensions explicitly. Vague instructions to "think carefully" are less effective than specifying the steps, criteria, or sequence you want the model to follow. Always pair chain-of-thought instructions with an appropriate role when domain expertise matters. Always include a constraint on the final output (length, format, or a confidence rating) to keep the conclusion actionable. And always test against edge cases, not just typical inputs, since reasoning failures tend to surface at the boundaries of the problem domain.

Chain-of-thought prompting is not a workaround or a trick. It's a fundamental technique grounded in how language models process and generate information. When applied correctly, paired with role prompting, smart constraints, and structured output formatting, it elevates AI from a pattern-matching text generator to a genuinely useful reasoning partner.

The techniques in this guide work across every major LLM in 2026: Claude, GPT-4o, Gemini, and others. They don't require special tools or API access. They require precision, structure, and a clear understanding of what you're asking the model to reason about. Start with a single complex task you use AI for regularly, apply a structured chain-of-thought prompt, and compare the output to what you were getting before. The difference is usually immediate, and often significant.